
Learn latent bandit models


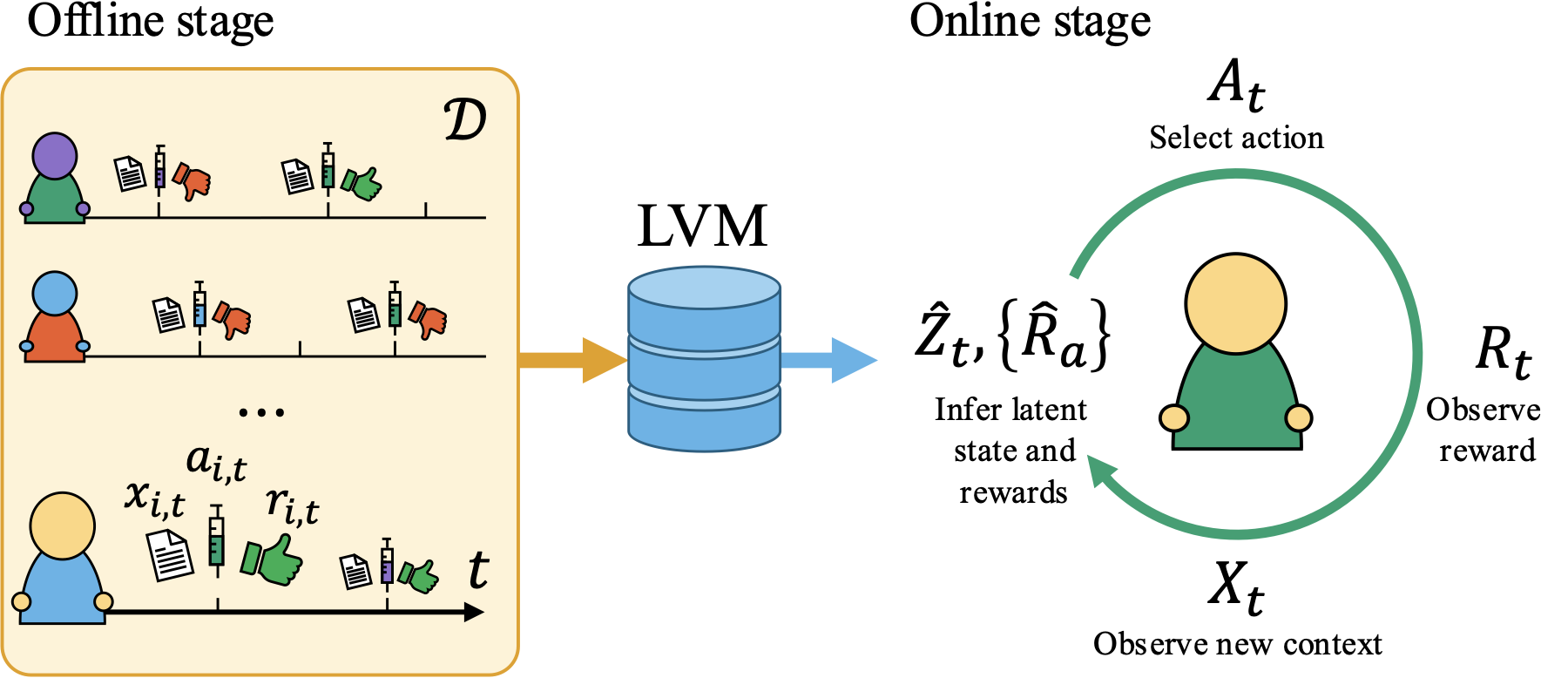

A framework for learning latent bandit models from observational histories, then using them for faster personalized sequential decision-making.

Sequential decision-making algorithms such as multi-armed bandits can find optimal personalized decisions, but are notoriously sample-hungry. In personalized medicine, for example, training a bandit from scratch for every patient is typically infeasible, as the number of trials required is much larger than the number of decision points for a single patient. To combat this, latent bandits offer rapid exploration and personalization beyond what context variables alone can offer, provided that a latent variable model of problem instances can be learned consistently. However, existing works give no guidance as to how such a model can be found.

In this work, we propose an identifiable latent bandit (ILB) framework that leads to optimal decision-making with a shorter exploration time than classical bandits by learning from historical records of decisions and outcomes. Our method is based on nonlinear independent component analysis that provably identifies representations from observational data sufficient to infer optimal actions in new bandit instances. We verify this strategy in simulated and semi-synthetic environments, showing substantial improvement over online and offline learning baselines when identifying conditions are satisfied.

Identifiable latent bandits use observational histories from previous instances to learn the hidden structure shared across individuals. The learned representation is then used online to infer a new instance's latent state from repeated contexts and choose actions with less exploration.

1

Introduces identifiable latent bandits, a family of latent bandit algorithms that recover a continuous vector-valued latent state without assuming the latent variable model is known in advance.

2

Builds on nonlinear ICA and introduces mean-contrastive learning to identify the latent variable model from observational histories.

3

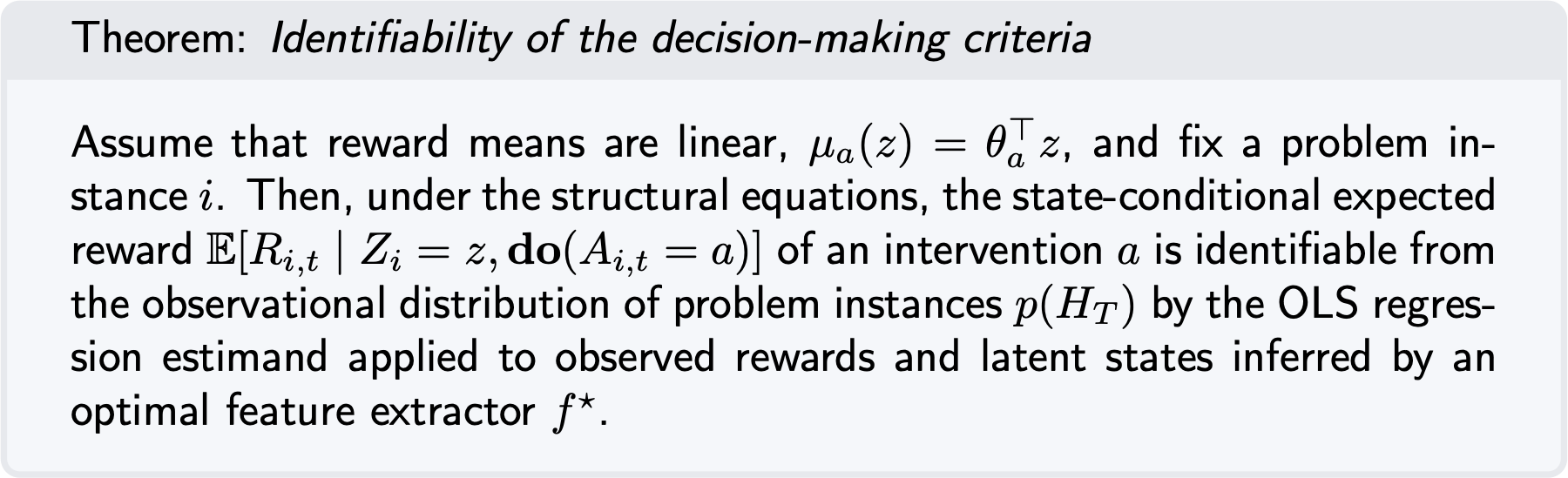

Proves that the learned representation is identifiable to the degree needed for optimal decision-making, then uses it in sequential regret-minimization algorithms.

4

Shows that, when identifying conditions hold, the algorithms improve over online bandits and offline regression baselines in synthetic and semi-synthetic treatment environments.

For many chronic diseases, treatment is individualized and sequential: patients go through a long process of trying different options one after another.

How can we use observational data to shorten treatment times?

Why latent bandits?

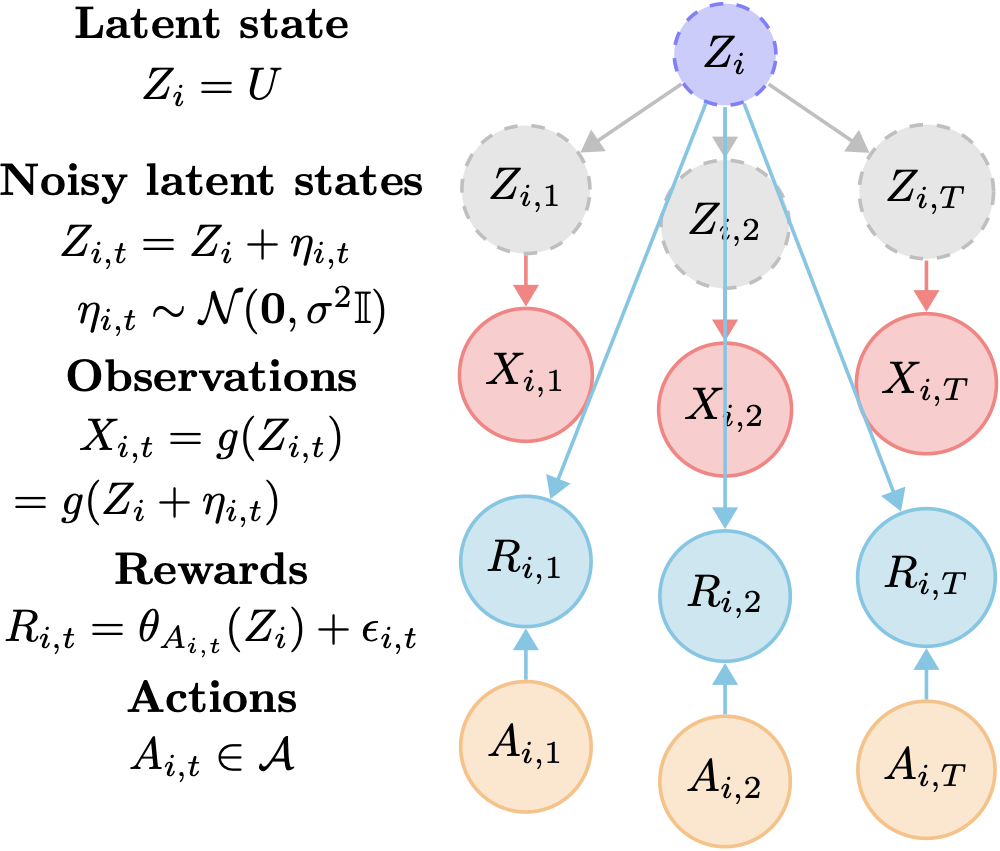

A single observed context can be noisy and incomplete. The optimal action may depend on a stable latent state that only becomes clear across repeated observations.

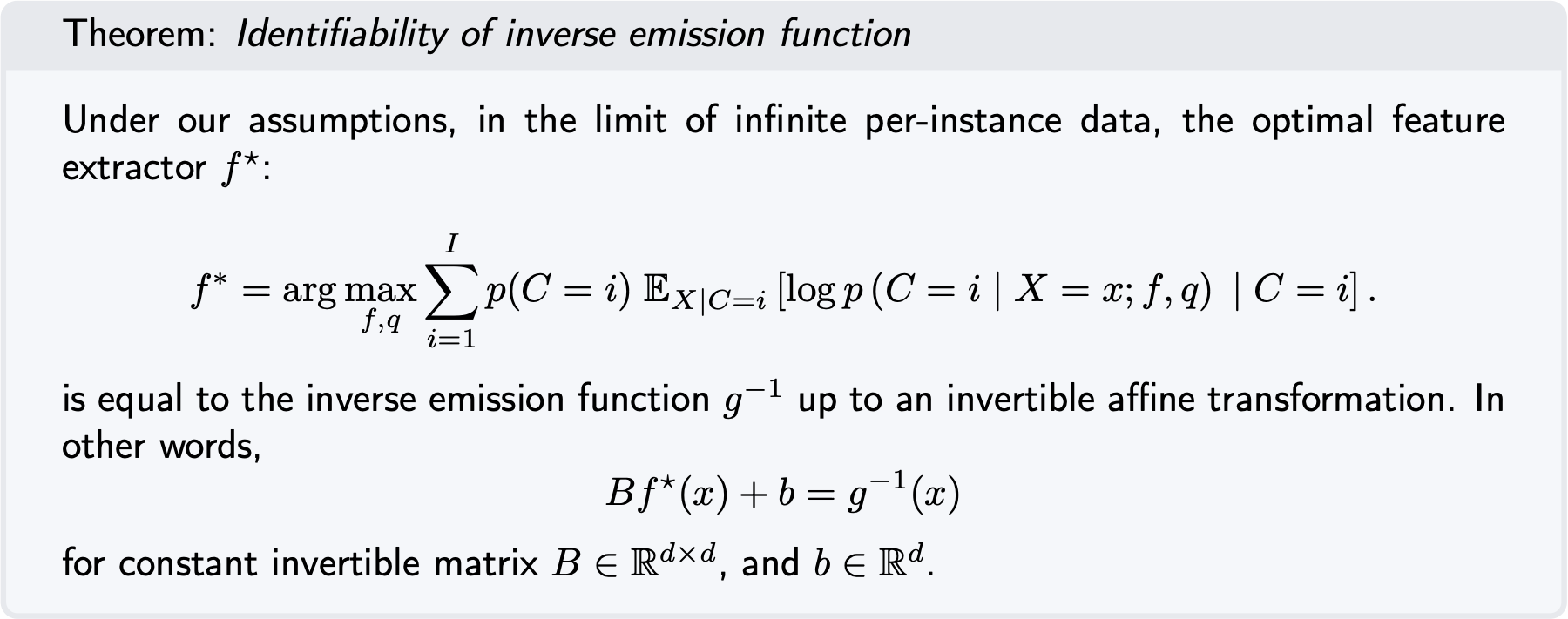

Why identifiability?

A learned latent model must recover the structure needed for decisions, not just reconstruct observations. The paper gives conditions under which this recovery is possible.

Why observational data?

Historical decisions and outcomes can warm-start personalization, reducing the amount of online exploration required for a new instance.

The offline stage learns an inverse emission model. Contexts are generated by a smooth injective emission function applied to a noisy latent state. We train a feature extractor f with a mean-contrastive objective: given a context observation, predict which historical instance generated it.
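The instance-identification idea can be sketched in a few lines of NumPy. This is a simplified stand-in, not the paper's mean-contrastive objective: we assume a linear emission, a linear feature extractor `f(x) = W x`, and a softmax classifier that scores instance k as `<f(x), c_k> + b_k`. The function `train_instance_classifier` and the parameters `W`, `C`, and `b` are hypothetical names introduced for illustration.

```python
import numpy as np


def softmax(logits):
    """Row-wise softmax, shifted by the row maximum for numerical stability."""
    shifted = logits - logits.max(axis=1, keepdims=True)
    e = np.exp(shifted)
    return e / e.sum(axis=1, keepdims=True)


def train_instance_classifier(X, ids, n_instances, dim, lr=0.05, epochs=3000, seed=0):
    """Hypothetical stand-in for the offline objective: learn f(x) = W x so that a
    softmax over instances can predict which historical instance produced x."""
    rng = np.random.default_rng(seed)
    d_x = X.shape[1]
    W = 0.5 * rng.standard_normal((dim, d_x))          # linear feature extractor f
    C = 0.5 * rng.standard_normal((n_instances, dim))  # per-instance score vectors
    b = np.zeros(n_instances)                          # per-instance biases
    Y = np.eye(n_instances)[ids]                       # one-hot instance labels
    n = len(X)
    for _ in range(epochs):
        H = X @ W.T                  # features f(x), shape (n, dim)
        P = softmax(H @ C.T + b)     # predicted instance probabilities
        G = (P - Y) / n              # gradient of mean cross-entropy w.r.t. logits
        grad_W = (G @ C).T @ X       # chain rule through H = X W^T
        grad_C = G.T @ H
        grad_b = G.sum(axis=0)
        W -= lr * grad_W
        C -= lr * grad_C
        b -= lr * grad_b
    return W, C, b


# Toy histories: each instance has a fixed latent state; every context is a
# noisy latent state passed through a linear emission (assumed, for simplicity).
rng = np.random.default_rng(1)
K, d_z, d_x, per_instance = 5, 2, 4, 100
angles = 2 * np.pi * np.arange(K) / K
Z = 3.0 * np.stack([np.cos(angles), np.sin(angles)], axis=1)  # latent state per instance
A = rng.standard_normal((d_x, d_z))                            # emission matrix
ids = np.repeat(np.arange(K), per_instance)
X = (Z[ids] + 0.3 * rng.standard_normal((len(ids), d_z))) @ A.T
X = (X - X.mean(axis=0)) / X.std(axis=0)                       # standardize contexts

W, C, b = train_instance_classifier(X, ids, K, dim=d_z)
accuracy = np.mean(np.argmax(X @ W.T @ C.T + b, axis=1) == ids)
print(f"instance-identification accuracy: {accuracy:.2f}")
```

The intuition is that instances can only be told apart through f, so a good solution to this classification task has to retain the latent information that distinguishes them.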

Once this feature extractor is learned, repeated contexts from an instance can be averaged in representation space to estimate the latent state. A reward model is then fit from inferred latent states and observed rewards, giving action-value estimates for online decision-making.
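A minimal sketch of the averaging and reward-fitting steps, assuming rewards are affine in the latent state and using per-arm ridge regression as the reward model (the paper's reward model may differ). To isolate these two steps, the feature extractor is replaced by the exact pseudo-inverse of a known linear emission, standing in for a perfectly learned f. The helpers `estimate_latent`, `fit_reward_models`, and `learned_f` are hypothetical.

```python
import numpy as np


def estimate_latent(contexts, f):
    """Average the learned representation over an instance's repeated contexts."""
    return np.mean([f(x) for x in contexts], axis=0)


def fit_reward_models(latents, actions, rewards, n_arms, ridge=1e-3):
    """Per-arm ridge regression of reward on [inferred latent, 1].

    Returns an array of shape (n_arms, d_z + 1): one row [weights, intercept] per arm.
    """
    Phi = np.hstack([latents, np.ones((len(latents), 1))])  # append intercept column
    models = []
    for a in range(n_arms):
        mask = actions == a
        P, r = Phi[mask], rewards[mask]
        theta = np.linalg.solve(P.T @ P + ridge * np.eye(P.shape[1]), P.T @ r)
        models.append(theta)
    return np.array(models)


rng = np.random.default_rng(0)
n_instances, n_contexts, d_z, d_x, n_arms = 300, 20, 2, 5, 3
A = rng.standard_normal((d_x, d_z))  # linear emission (assumption)
A_pinv = np.linalg.pinv(A)


def learned_f(x):
    """Stand-in for a perfectly learned inverse emission."""
    return A_pinv @ x


true_theta = np.array([[1.0, 0.0], [0.0, 1.0], [-1.0, 0.5]])
Z = rng.standard_normal((n_instances, d_z))  # hidden latent state of each past instance
latents = np.array([
    estimate_latent([A @ (z + 0.5 * rng.standard_normal(d_z)) for _ in range(n_contexts)], learned_f)
    for z in Z
])
actions = rng.integers(0, n_arms, size=n_instances)  # one logged decision per instance
rewards = np.einsum("ij,ij->i", true_theta[actions], Z) + 0.1 * rng.standard_normal(n_instances)

models = fit_reward_models(latents, actions, rewards, n_arms)
print(np.round(models, 2))  # each row should be close to [true_theta[a], 0]
```

With i.i.d. latent noise and a linear f, averaging n contexts shrinks the noise in the latent estimate by roughly a factor of sqrt(n), which is why repeated contexts sharpen the estimate.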

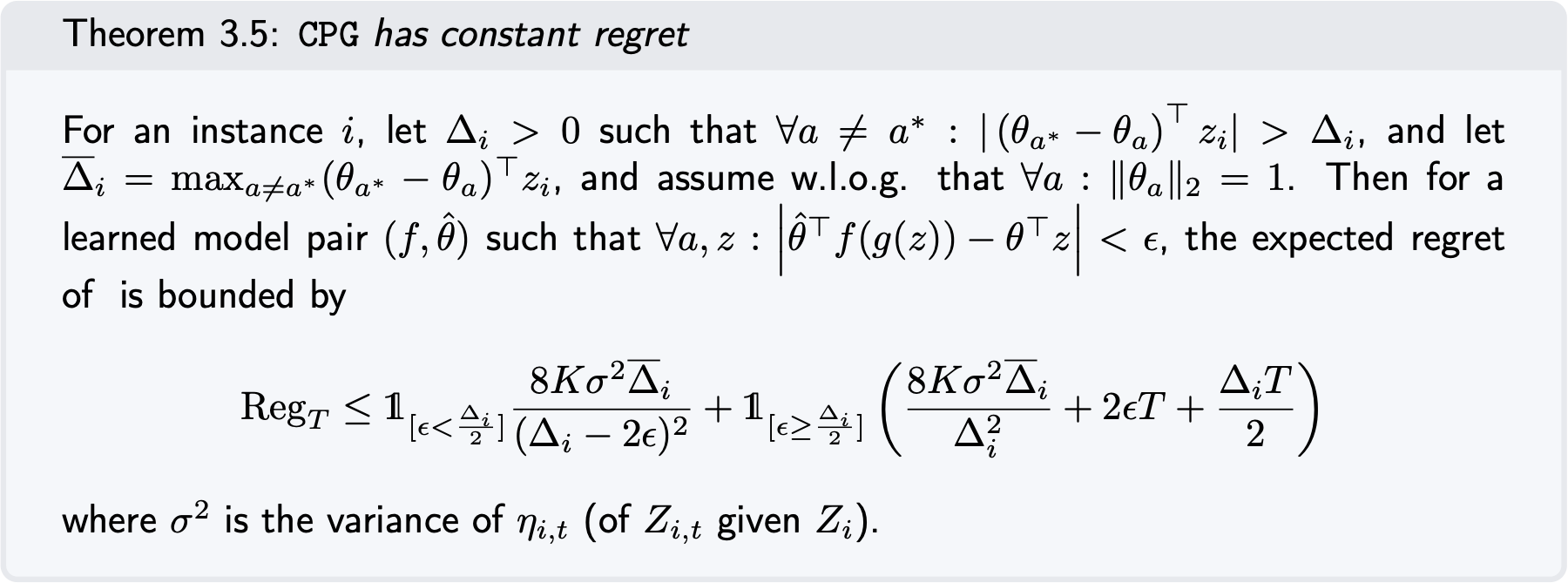

The paper proves that the learned representation is identifiable up to affine transformations that still preserve the action rankings needed for optimal decisions. It then shows that the corresponding reward model is identifiable from observational data, and that context posterior greedy (CPG) achieves constant regret when the learned model is sufficiently accurate.
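Why an affine ambiguity can be harmless for decisions is easy to check numerically in a simplified setting we assume here: rewards are affine in the latent state, and the reward model is an affine least-squares fit per arm. If the learned representation equals `M z + c` for some invertible `M`, then refitting the reward model in the learned space gives the same predictions, so the greedy action is unchanged. `M`, `c`, and `fit_affine` are hypothetical, and the check observes every arm's reward for every instance, which real observational data would not provide.

```python
import numpy as np


def fit_affine(features, targets):
    """Least-squares fit of targets on [features, 1]; returns a prediction function."""
    Phi = np.hstack([features, np.ones((len(features), 1))])
    coef, *_ = np.linalg.lstsq(Phi, targets, rcond=None)
    return lambda F: np.hstack([F, np.ones((len(F), 1))]) @ coef


rng = np.random.default_rng(0)
n, d_z, n_arms = 200, 3, 4
Z = rng.standard_normal((n, d_z))                          # true latent states
theta = rng.standard_normal((n_arms, d_z))
R = Z @ theta.T + 0.1 * rng.standard_normal((n, n_arms))   # noisy reward of every arm

M = rng.standard_normal((d_z, d_z)) + 3 * np.eye(d_z)      # invertible mixing (assumed)
c = rng.standard_normal(d_z)
H = Z @ M.T + c                                            # latents recovered up to an affine map

Z_new = rng.standard_normal((50, d_z))
pred_true = fit_affine(Z, R)(Z_new)
pred_learned = fit_affine(H, R)(Z_new @ M.T + c)

print(np.allclose(pred_true, pred_learned))                                   # True
print(np.array_equal(pred_true.argmax(axis=1), pred_learned.argmax(axis=1)))  # True
```

Algebraically, `[H, 1] = [Z, 1] T` for an invertible block matrix `T`, so the least-squares coefficients change by `T^{-1}` and the fitted predictions are identical.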

CPG

Uses the average learned representation of observed contexts to estimate the latent state, then acts greedily under the offline reward model.
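A sketch of a single CPG decision, assuming the offline reward model is affine in the representation and stored as one row `[weights, intercept]` per arm. `cpg_action` and this storage layout are hypothetical choices for illustration.

```python
import numpy as np


def cpg_action(contexts, f, reward_models):
    """One context-posterior-greedy decision (sketch).

    contexts: contexts observed so far for the new instance.
    f: learned feature extractor.
    reward_models: array (n_arms, d + 1), one row [weights, intercept] per arm.
    """
    z_hat = np.mean([f(x) for x in contexts], axis=0)  # latent-state estimate
    values = reward_models[:, :-1] @ z_hat + reward_models[:, -1]
    return int(np.argmax(values)), values


def identity(x):
    return x


reward_models = np.array([
    [1.0, 0.0, 0.0],    # arm 0: reward = z_1
    [0.0, 1.0, 0.0],    # arm 1: reward = z_2
    [-1.0, -1.0, 0.5],  # arm 2: reward = 0.5 - z_1 - z_2
])
contexts = [np.array([0.9, 0.1]), np.array([1.1, -0.1])]
action, values = cpg_action(contexts, identity, reward_models)
print(action)  # 0
```

Each new context refines `z_hat`, and the policy never explores on purpose; it relies entirely on the offline model being accurate.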

FPG

Refines the latent-state estimate using both context history and observed rewards, making it more adaptive when the representation is biased.
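One way to combine contexts and rewards is a conjugate linear-Gaussian update, sketched below under assumptions that are ours: the context average defines a Gaussian prior over the latent state, and each reward follows `r = thetas[a] @ z + noise` with known noise variance. This illustrates refining a biased context-based estimate with reward feedback; it is not necessarily how FPG is implemented in the paper. `fpg_posterior` and `fpg_action` are hypothetical names.

```python
import numpy as np


def fpg_posterior(prior_mean, prior_cov, thetas, actions, rewards, noise_var):
    """Gaussian posterior over the latent state after observing rewards.

    Assumed model (hypothetical): z ~ N(prior_mean, prior_cov), with the prior built
    from averaged context features, and r_t = thetas[a_t] @ z + eps_t,
    eps_t ~ N(0, noise_var).
    """
    prior_prec = np.linalg.inv(prior_cov)
    Phi = thetas[actions]                           # (t, d) coefficients of pulled arms
    post_prec = prior_prec + Phi.T @ Phi / noise_var
    post_cov = np.linalg.inv(post_prec)
    post_mean = post_cov @ (prior_prec @ prior_mean + Phi.T @ rewards / noise_var)
    return post_mean, post_cov


def fpg_action(post_mean, thetas):
    """Greedy arm under the posterior-mean latent state."""
    return int(np.argmax(thetas @ post_mean))


thetas = np.array([[1.0, 0.0], [0.0, 1.0], [0.7, 0.7]])
z_true = np.array([1.0, -0.5])
prior_mean = np.array([0.2, 0.4])      # biased context-based estimate
prior_cov = 0.5 * np.eye(2)
actions = np.tile(np.arange(3), 10)    # cycle through the arms 10 times
rewards = thetas[actions] @ z_true     # noise-free rewards, for illustration only
post_mean, post_cov = fpg_posterior(prior_mean, prior_cov, thetas, actions, rewards, noise_var=0.1)

print(fpg_action(prior_mean, thetas), fpg_action(post_mean, thetas))  # 2 0
```

The biased context-only estimate would keep choosing arm 2, while the reward-informed posterior moves close to the true latent state and switches to the optimal arm 0.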

FPG-TS

Samples reward means from the posterior to trade off fast personalization against recovery from uncertainty or misspecification.
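A Thompson-sampling step can be sketched on top of a Gaussian posterior over the latent state, which is an assumption made here. Under the linear reward model also assumed here, drawing a latent state and mapping it through `thetas` is the same as jointly sampling the arms' reward means. `fpg_ts_action` is a hypothetical name.

```python
import numpy as np


def fpg_ts_action(post_mean, post_cov, thetas, rng):
    """Thompson-sampling step: draw a latent state from the Gaussian posterior,
    map it to sampled reward means, and act greedily on the sample."""
    z_sample = rng.multivariate_normal(post_mean, post_cov)
    sampled_means = thetas @ z_sample
    return int(np.argmax(sampled_means))


rng = np.random.default_rng(0)
thetas = np.array([[1.0, 0.0], [0.0, 1.0], [0.7, 0.7]])
post_mean = np.array([1.0, -0.5])

# A tight posterior behaves like greedy; a wide one spreads choices across arms.
tight_arms = {fpg_ts_action(post_mean, 1e-4 * np.eye(2), thetas, rng) for _ in range(200)}
wide_arms = {fpg_ts_action(post_mean, 4.0 * np.eye(2), thetas, rng) for _ in range(200)}
print(tight_arms, sorted(wide_arms))
```

The spread under a wide posterior is what leaves room to recover when the offline model is biased or misspecified, at the cost of some extra exploration.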

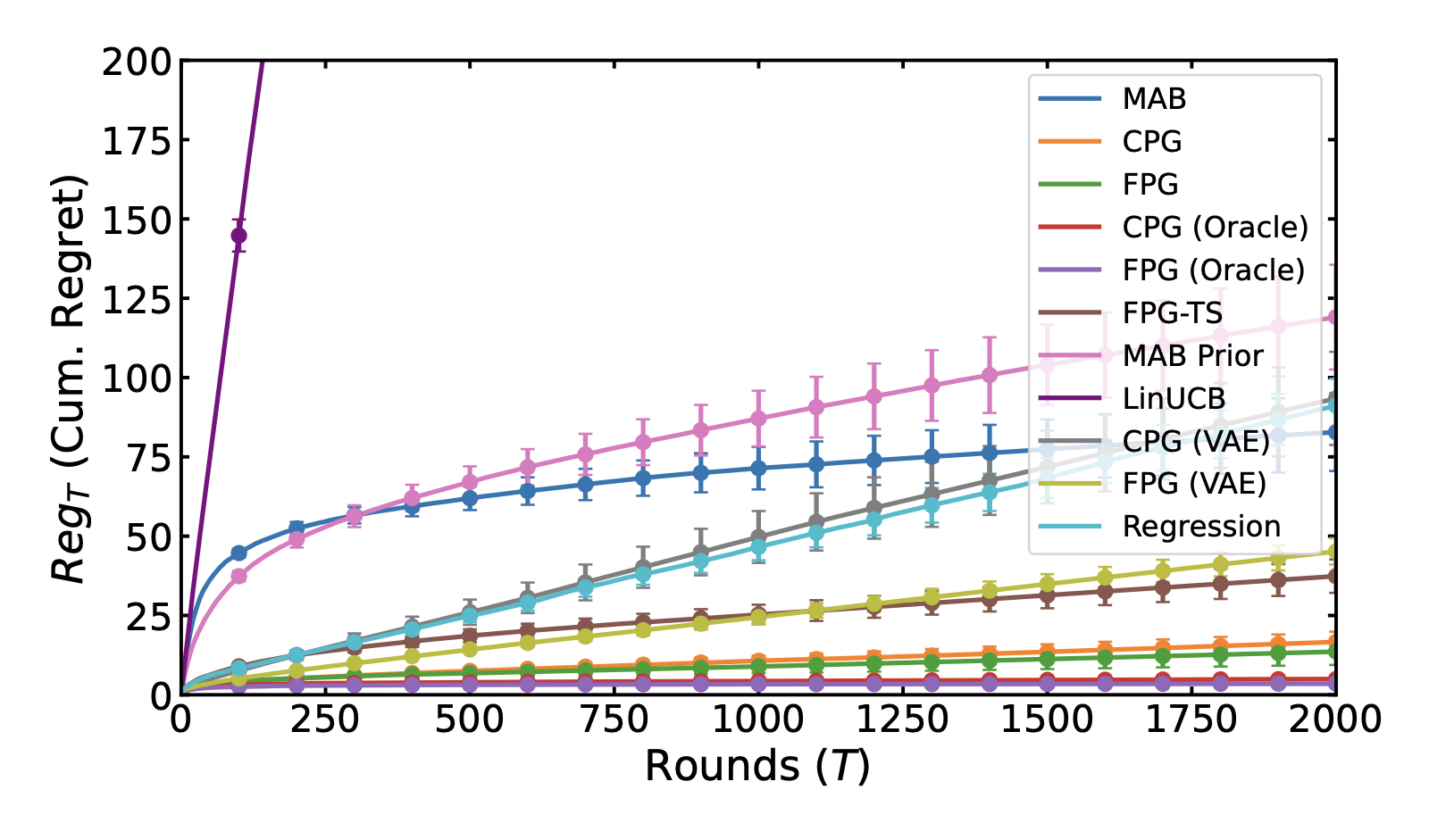

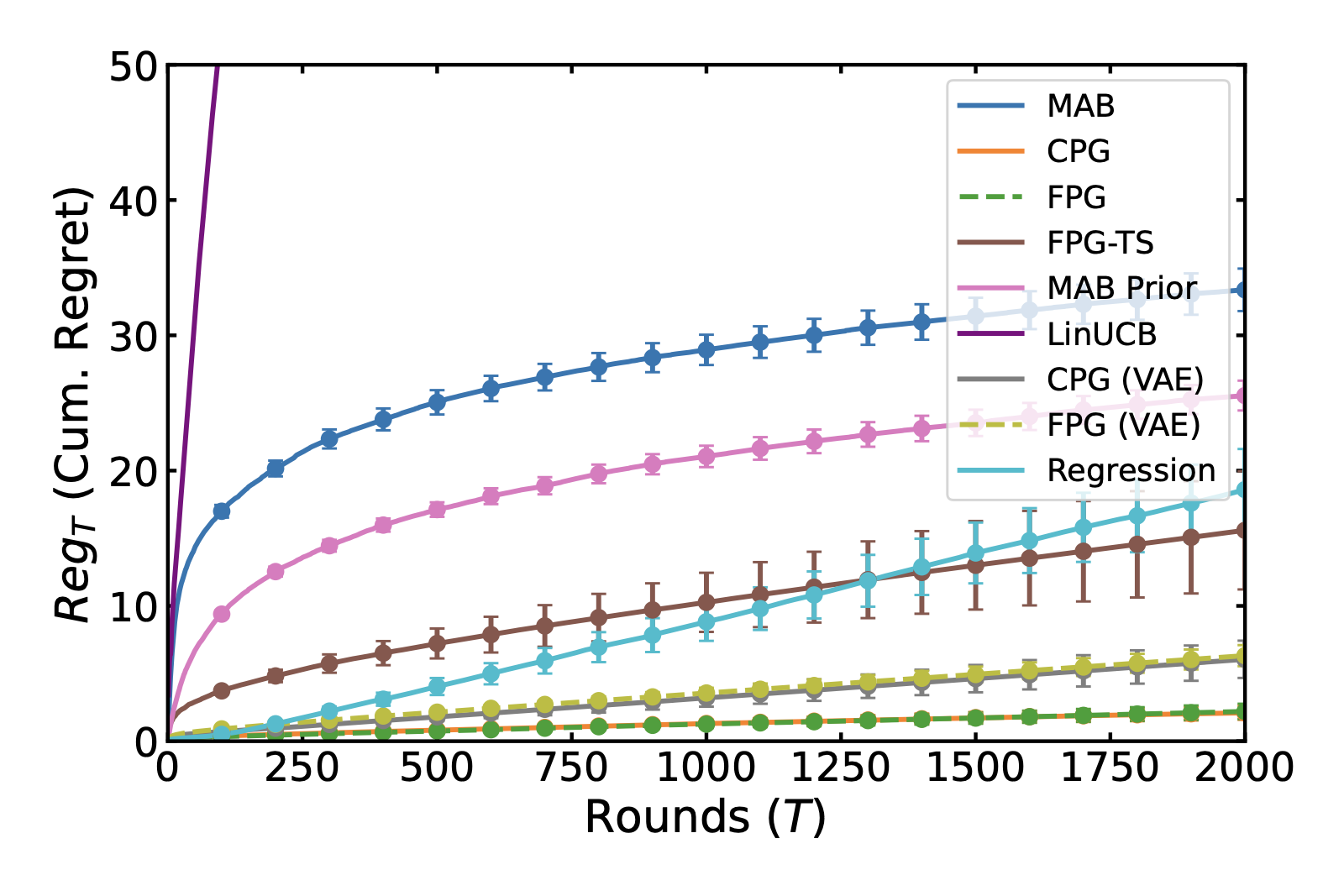

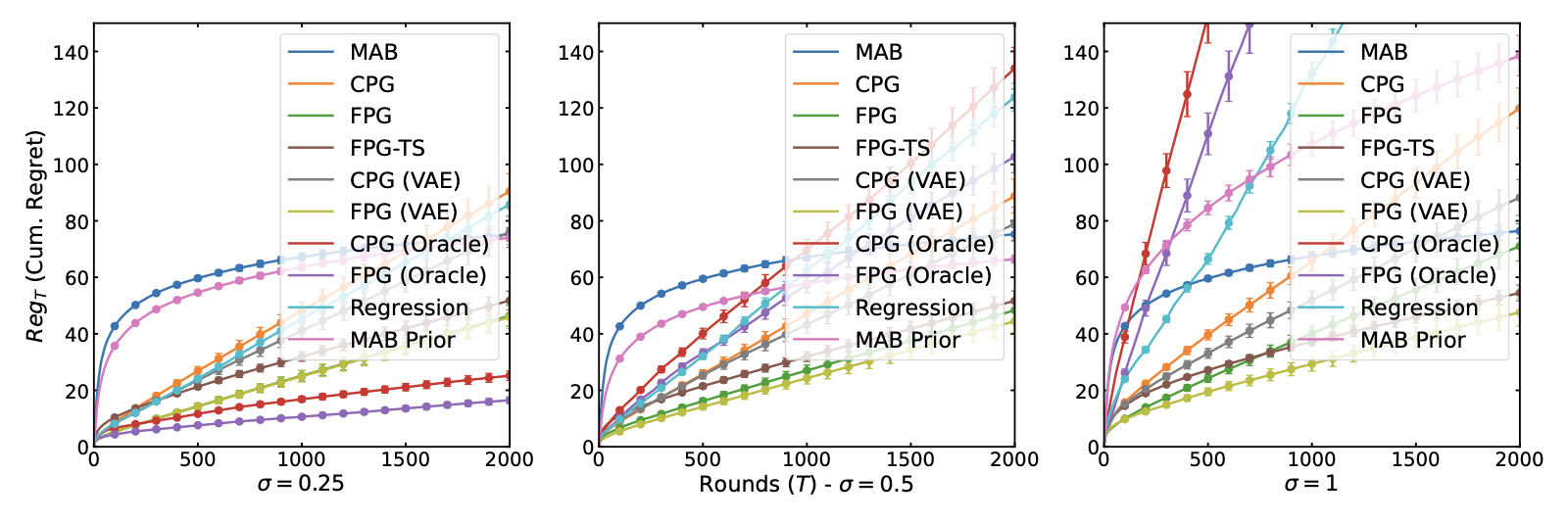

In synthetic and semi-synthetic Alzheimer's disease treatment environments, identifiable latent bandits converge faster than fully online bandits and avoid much of the bias seen in direct regression baselines when the identifying assumptions hold.

The experiments also map the limitations: as latent-state noise, context noise, out-of-distribution shift, or the number of arms increases, the tradeoff between fast offline transfer and unbiased online exploration becomes more visible.

The central message is that latent bandits do not need to assume their latent variable model is known in advance. Under identifiable structure, the model can be learned from observational histories and then used to reduce online exploration for future personalized decisions.

@article{balcioglu2026identifiable,
  title = {{Identifiable Latent Bandits}: Leveraging observational data for personalized decision-making},
  author = {Balc{\i}o{\u{g}}lu, Ahmet Zahid and Mwai, Newton and Carlsson, Emil and Johansson, Fredrik D.},
  journal = {Transactions on Machine Learning Research},
  year = {2026},
  url = {https://openreview.net/forum?id=SvkZ76wKpu}
}